Fix Flaky Tests and Ship with Fewer Bugs

Eliminate flaky test failures with time travel debugging

I can’t reproduce the issue. When I run it on my machine, it works.

Sound familiar?

We have a solution.

Flaky tests are a sign of structural weakness

Intermittent or nondeterministic test failures, also known as flaky tests, are some of the hardest to reproduce – especially in large, multithreaded codebases. As a result, a lot of them remain unresolved.

Answer this, though. If you smelled smoke in your house, would you ignore it? Or would you investigate the root cause?

The root cause of flaky tests could either be due to:

- Non-deterministic behavior in the test themselves.

- Non-determinism in the software under test – usually caused by multithreading issues as that introduces non-determinism.

And the negative impact of leaving a flaky test problem unaddressed is significant.

Codebase stability

Flaky tests directly undermine codebase stability by introducing uncertainty and unreliability into the very system meant to safeguard it: the test suite. With flaky tests, engineers can’t say with confidence that a build is stable.

When tests fail randomly, developers start ignoring test failures because “they’re probably just flaky”; so real bugs slip through into production. The risk of shipping bugs is very high.

Over time, undiscovered regressions accumulate and weaken the codebase – leading to an unstable codebase.

Developer productivity killer

Flaky tests also slow down delivery and delay releases.

When they are not ignored, flaky test failures trigger wasted engineering time through:

- test re-runs

- debugging sessions

- slack threads

And most of that engineering time is spent on trying to reproduce the problem.

Best practices for reliably reproducing flaky tests

If you have known instability in the codebase, Undo can help with its time travel debugging solution.

Here are the best practices we’ve seen implemented in the field:

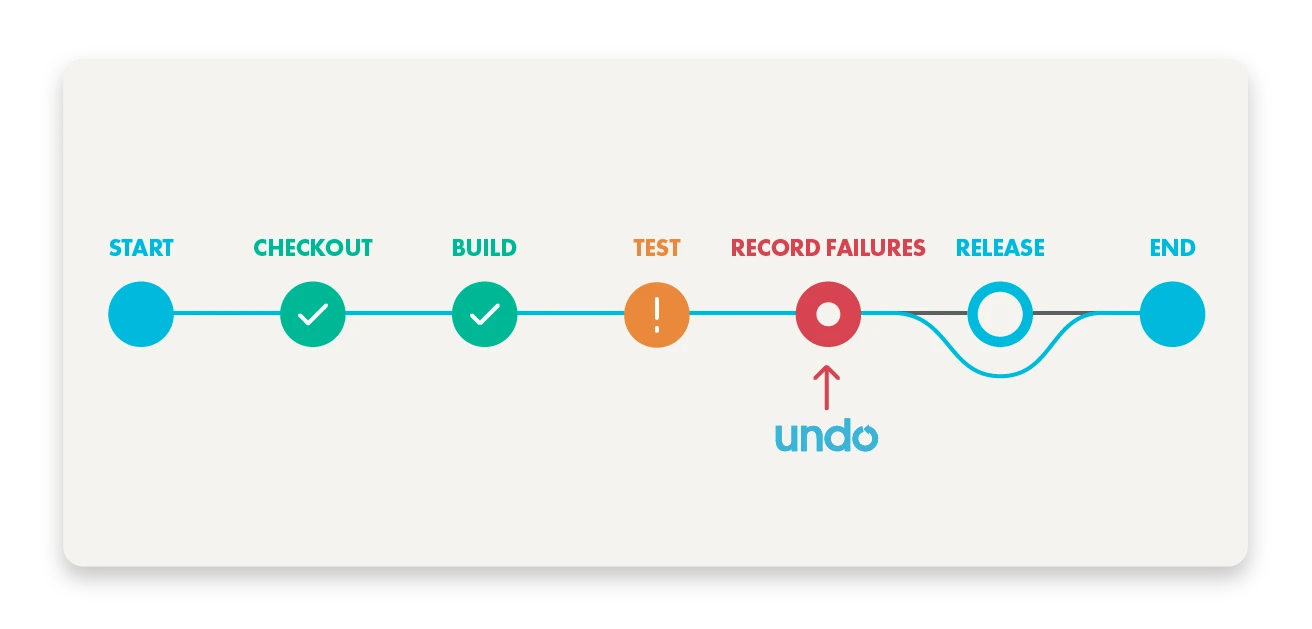

- Integrate Undo into your CI / Build System. Configure it so that when a test fails, it automatically re-runs the test under recording, capturing the bug in the recording file.

- Send a ticket to the team whose test failed – attaching the recording to the ticket.

- The engineer simply debugs the recording file. No more “works on my machine”!

Undo decouples test execution from diagnosis:

- Capture a flaky test run in CI or a distributed test farm.

- Analyze it later, offline, without needing to rerun or re-trigger the flake.

This debugging workflow saves weeks of developer time per year.

Since multithreading issues are a common culprit for flaky tests, you can also re-run the test (in step 1) using Undo’s thread fuzzing capability. Thread fuzzing is used to shake the CPU scheduler and quickly expose hidden race conditions.

“Undo and its directed thread fuzzing capability has become essential for debugging our heavily multithreaded codebase and making sure we identify and fix known bugs during development – before they get anywhere near customers.”

David – Software Engineer at a leading networking company [Read the full story]

We had written a unity test that stress-tested a newly added piece of concurrent code and there was some issue we couldn’t understand. We couldn’t reproduce the issue at first, but using Undo with thread fuzzing turned on, we were able to reproduce the issue within minutes.

Andreas Erz, Software Developer at SAP [Read the full interview]

Learn more about how SAP HANA’s engineering team is able to deliver faster, higher-quality releases at scale:

Strategies for managing flaky tests in CI pipeline

Some engineering teams decide to take flaky tests out of their automated test suite into quarantine. For example, if it fails 3 times within 2 weeks, it’s a flaky test and it gets pulled out of the delivery pipeline and added to a ‘sporadic tests farm’. Only when that test has proved itself stable, will it be reintroduced into the delivery pipeline. Although test coverage is temporarily lost, this quarantining strategy prevents disruption to the CI/CD pipeline.

As part of the quarantining, Undo can be used to continuously run the tests in the sporadic tests farm in a loop, under recording until they fail. Once they fail under recording, engineers have everything they need inside those recordings to quickly diagnose the problem.

Top tip: run the tests repeatedly and record as much as you can (product code and test code).

Or, if preferable, intermittently failing tests can be tagged as such and always run under recording while leaving them in the main suite so that coverage is not lost. When the tests fail again, you then have a recording.

Release with confidence: ship with fewer bugs and ensure stability at scale

Now you know it’s possible to reliably reproduce flaky tests, uncover root causes in an afternoon, and maintain stable releases. And it is possible to do this without complex setup, or needing to use up significant engineering effort to replace all your synchronization logic with special synchronization logic, recompile with all sorts of special flags, and without getting lots of false positives.

Want to explore how Undo could help you fix your flaky tests problem?

FAQs

Flaky tests are tests that sometimes pass and sometimes fail without any changes to the codebase – they’re unreliable, inconsistent, and hard to trust.

Flaky tests often fail inconsistently because of timing issues, race conditions, or external dependencies. Undo lets you:

- Record the execution of a flaky test once (in CI or locally).

- Replay it deterministically, step-by-step, with full visibility into memory, threads, variables, and execution flow.

- Rewind and debug backwards in execution time, to see what happened and why.

✅ Result: You can reproduce the flake every time. No need to “wait for it to happen again”

Undo works with normal releases without any code or build changes, so you don’t need to modify your code and you need to recompile.

ThreadSanitizer detects data races directly, which is effective if the program omits to check the consistency of its own data structures.

Thread Fuzzing varies the scheduling of threads, which can be effective when a data race occurs only under unusual conditions, and produces an Undo recording that makes it possible to reproduce the failure in a debugger, which is effective when the cause of a race is complex.