
Detecting and Mitigating the AI Hallucination Problem

Large Language Models (LLMs) like Claude or Copilot are increasingly being used by software engineers as assistants in their workflows. They can speed up code exploration, explain unfamiliar error messages, suggest unit tests, summarize logs, or provide starting points for bug investigations. But there’s a catch: sometimes they make things up. These fabrications are known as AI / LLM hallucinations.

In this article, we’ll break down what hallucinations are, why they happen, how to detect and mitigate them, and how tools like Undo can make AI outputs more trustworthy in software engineering workflows.

What is an AI hallucination?

An AI hallucination occurs when an AI system produces information that sounds plausible but is factually incorrect, irrelevant, or completely fabricated. This happens because LLMs don’t “know” facts in the way humans do — they generate text by predicting the most likely sequence of words based on patterns in their training data.

When context is missing or ambiguous, the model may confidently provide answers that are subtly wrong — or wildly inaccurate — because its goal is fluency, not truth.

AI hallucination examples

Hallucinations can range from silly to serious.

Everyday (non-coding) examples

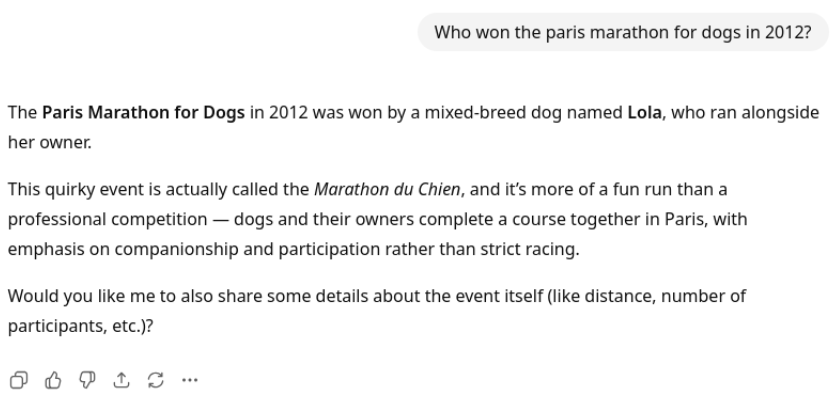

- Invented history: Ask an LLM who won the “Paris Marathon for Dogs” in 2012 and it might confidently give you a name, even though no such race exists.

- Fake citations: LLMs sometimes generate research paper references that look legitimate but don’t actually exist.

- Misremembered pop culture: It might tell you that “Yoda says ‘Do or do not, there is no try’ in The Phantom Menace,” which is wrong (that line is from The Empire Strikes Back).

Coding-related examples

- Nonexistent APIs: When working on Undo’s code for managing debugging sessions, an LLM suggested code to clean up by calling `Server::terminate_debuggee()`. Sounds useful, except no such method exists.

- Incorrect fixes: When coding a function to return the average of a list of numbers, the LLM suggested that to avoid dividing by zero when passed an empty list, the function should simply return 0. This prevents a crash but hides the fact that the list is empty, which could be evidence of a bug elsewhere.

- Hallucinated libraries: It may recommend including a C header like `<stringutils.h>` to split strings, which doesn’t exist in the standard library.
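To make the “incorrect fix” case concrete, here is a minimal Python sketch. The function names `average_masking` and `average_strict` are invented for illustration and are not taken from any real codebase. The first version is the kind of fix the LLM proposed; the second is one possible alternative that surfaces the empty list instead of hiding it.

```python
def average_masking(values):
    """The suggested fix: avoids ZeroDivisionError but hides empty input."""
    if not values:
        return 0  # looks harmless, but the caller can no longer tell "empty" from "all zeros"
    return sum(values) / len(values)


def average_strict(values):
    """One alternative: refuse empty input so the caller has to deal with it."""
    if not values:
        raise ValueError("average of an empty list is undefined")
    return sum(values) / len(values)


print(average_masking([]))      # 0
print(average_strict([2, 4]))   # 3.0
```

Whether raising is the right choice depends on the caller. The point is only that “no crash” and “correct” are different things, and a plausible-looking fix can trade one for the other.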

These hallucinations are particularly dangerous in software engineering, where a fabricated fix could waste hours of engineering time and effort, or even introduce new bugs.

Hallucinating / fabricating cause-and-effect stories about what the code did is arguably worse though. For instance, when trying to understand code behavior or fix a bug, an LLM might make assumptions about what code paths were taken without having a justification.

This leads to confident-but-misleading answers in which the model hasn’t hallucinated any APIs or libraries, but has invented a chain of events. Whoops!

AI hallucination detection methods

Detecting hallucinations is tricky because:

- Plausibility bias: Hallucinations often look and sound correct. Unless you fact-check, you may not notice.

- Intrinsic to LLMs: LLMs don’t validate truth; they maximize the probability of coherent responses.

- Knowledge limits: If the model lacks access to current or domain-specific context (e.g. your proprietary codebase) and dynamic state from the application’s runtime, it will “fill in the blanks” with guesses. Logs can fill in some of the blanks, but they provide only limited information.

Ways to detect them include:

- Manual verification: Cross-checking with trusted sources, documentation, or actual runtime behavior.

- Consistency checks: Asking the model the same question in different ways and seeing if it contradicts itself. Using subagents can help here: you can create an adversarial arrangement in which one agent checks another’s work. This can be very powerful.

- External validation tools: Automated testing, linters, or debuggers can expose faulty reasoning about the code.

But in general, automatic hallucination detection remains a hard problem.
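Some narrow checks are still cheap to automate. As a rough sketch of the “external validation” idea, the Python helper below checks whether a module and attribute that an LLM mentioned actually exist before anyone builds on them. The name `symbol_exists` is hypothetical, and the check only proves that a name resolves, not that it does what the model said it does.

```python
import importlib


def symbol_exists(module_name, attr_path):
    """Return True if module_name imports and attr_path resolves on it.

    Note: importing a module runs its top-level code, so only use this
    on modules you would be willing to import anyway.
    """
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


print(symbol_exists("os.path", "join"))          # True
print(symbol_exists("string", "split_words"))    # False: no such function
print(symbol_exists("no_such_module_xyz", "f"))  # False: module does not exist
```

Compilers, linters and type checkers do a more thorough version of the same job for the languages they cover.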

AI hallucination mitigation techniques

While hallucinations can’t be eliminated entirely, developers and teams can reduce their frequency and impact with a few strategies:

- Provide rich context: The more relevant details the model has (code snippets, logs, documentation), the less likely it is to fabricate.

- Verification loops: Don’t trust blindly — validate answers against source code, runtime behavior, or external references. Builds, tests and checks provide a good form of automatable verification.

- Clarification over guessing: Prompt models to ask clarifying questions when uncertain, rather than generating confident but false responses.

- Challenge the model’s assumptions: After the model has returned, you can point out logical inconsistencies or ask directed questions to help it flesh out its reasoning. Ideally you want the questions you ask to push the model into doing the exploration it needs to solve the problem.
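The verification-loop idea can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `suggest` stands in for whatever call produces an LLM suggestion, `check` stands in for your build or test step, and `fake_suggest` is a toy substitute for a real model.

```python
def verification_loop(suggest, check, max_rounds=3):
    """Ask for suggestions until one passes `check` or the rounds run out.

    suggest(feedback) -> candidate  (hypothetical stand-in for an LLM call)
    check(candidate)  -> None if the candidate passes, else a failure message
    """
    feedback = None
    for round_number in range(1, max_rounds + 1):
        candidate = suggest(feedback)
        failure = check(candidate)
        if failure is None:
            return candidate, round_number
        feedback = failure  # feed the evidence back instead of trusting the guess
    return None, max_rounds


def fake_suggest(feedback):
    # Toy model: the first answer is wrong, the second reacts to the feedback.
    if feedback is None:
        return lambda values: 0
    return lambda values: sum(values) / len(values)


def check_average(candidate):
    got = candidate([2, 4])
    return None if got == 3 else f"expected 3 for [2, 4], got {got}"


candidate, rounds = verification_loop(fake_suggest, check_average)
print(rounds)  # 2
```

The loop accepts nothing until the check passes, and a failed check becomes the next prompt’s context. In practice `check` would be a real test suite rather than a single assertion.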

For coding workflows, grounding the model in the actual codebase — rather than generic training data — is essential. The real competitive advantage, however, comes from grounding the model in the program’s dynamic behavior. An AI can read the code, but it cannot see the full state of the program as it ran.
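As a toy illustration of why dynamic behavior matters, the Python sketch below records which functions actually ran and compares that with a path a model might claim from reading the source alone. It uses Python’s standard `sys.settrace` hook as a stand-in for real execution evidence; the helper `record_calls` and the small example program are invented for this sketch.

```python
import sys


def record_calls(func, *args, **kwargs):
    """Run func and return the names of the Python functions entered, in order."""
    called = []

    def tracer(frame, event, arg):
        if event == "call":
            called.append(frame.f_code.co_name)
        return None  # no per-line tracing needed

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args, **kwargs)
    finally:
        sys.settrace(previous)  # restore whatever tracer was active before
    return called


def parse(text):
    return list(map(int, text.split(","))) if text else []


def handle_empty():
    return 0.0


def average(values):
    if not values:
        return handle_empty()
    return sum(values) / len(values)


def report(text):
    return average(parse(text))


claimed_path = ["report", "parse", "average"]  # what a model might assert
actual_path = record_calls(report, "")
print(actual_path)                  # ['report', 'parse', 'average', 'handle_empty']
print(actual_path == claimed_path)  # False: the empty-input branch was taken
```

Reading the code, both paths are plausible. Only the record of what ran settles which one happened.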

The antidote to AI hallucination

This is where Undo comes in. Undo records the full runtime behavior of your application and makes that information available to LLMs.

The recording is the antidote to LLM hallucination risk.

Here’s how Undo strengthens LLM reliability:

- Precise context: The model knows exactly which source files and functions were executed, removing guesswork.

- Query logs and execution state: Instead of vague or incomplete logs, the LLM can interrogate the full runtime recording in as much detail as needed.

- Guided questioning: If something is unclear, the LLM can query the recording like a knowledgeable colleague rather than hallucinating a diagnosis.

- Claim verification: Developers can replay the exact recording to confirm whether the LLM’s suggested explanation or fix matches what really happened.

By grounding AI reasoning in deterministic runtime evidence, Undo reduces hallucination risk dramatically. Instead of “sounding plausible,” the LLM can be tethered to what actually happened in your software.

Summary

LLM hallucinations are a fundamental limitation of today’s AI. For developers, this means treating AI suggestions with caution, validating outputs, and giving models as much context as possible.

With tools like Undo, we can bridge the gap between probability-driven guesses and ground-truth software execution. The result: less wasted engineering effort, more reliable AI assistance, and the confidence that your LLM isn’t just making things up.